Despite their obvious operational benefits, modern architectures based on cloud infrastructure and microservices tend to take control away from developers and make working locally more painful.

Testcontainers is an open source framework for providing throwaway, lightweight instances of databases, message brokers, web browsers, or just about anything that can run in a Docker container. This allows developers to write and run unit tests with real dependencies. The libraries are also increasingly used at dev-time by defining local dependencies as code. Overall, Testcontainers libraries transform how teams create software and empower developers to iterate faster and ship with confidence.

For this report, we surveyed the Testcontainers community to understand the state of local development and testing in 2023. Continue reading for seven key insights and trends.

1

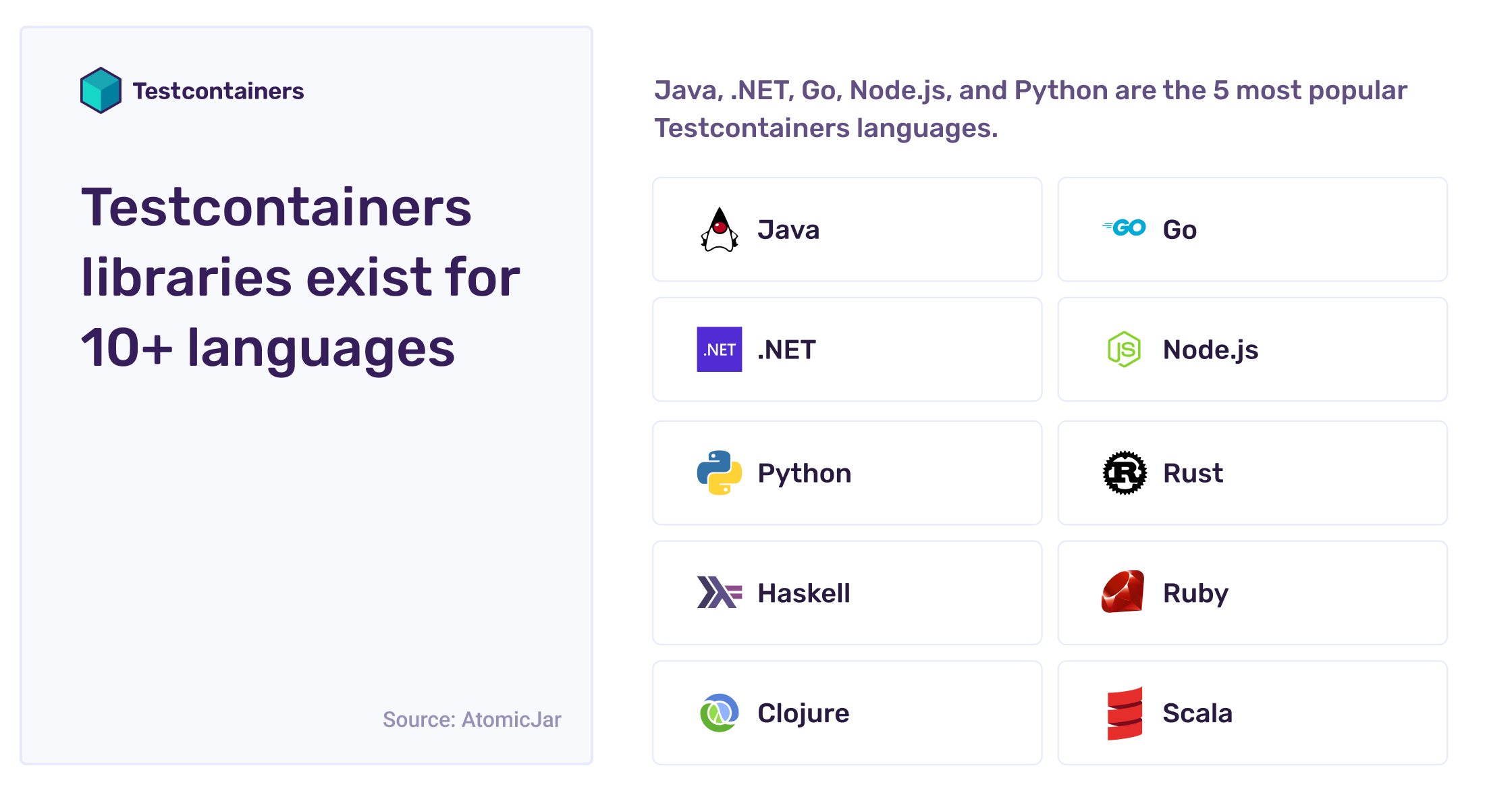

Testcontainers libraries exist for 10+ languages

Testcontainers libraries now exist for more than ten languages. Java, .NET, Go, Node.js, and Python are the five most popular. 80% of the community uses Testcontainers Java to some extent, and 30% has experience with at least one other language.

Developers represent 95% of the Testcontainers community. Users range from solo freelancers and small and medium businesses to large enterprises, with a third of respondents working at companies with 1,000+ employees.

The community is still young, and adoption is growing rapidly. 30% of respondents are in their first year of working with Testcontainers, including 10% getting started only within the past few weeks! 40% of respondents are early evangelists, while the other 60% have already spread Testcontainers usage to their whole team or to several teams.

Check out testcontainers.com to get started, and join the public Slack to help grow the community for your favorite Testcontainers language!

2

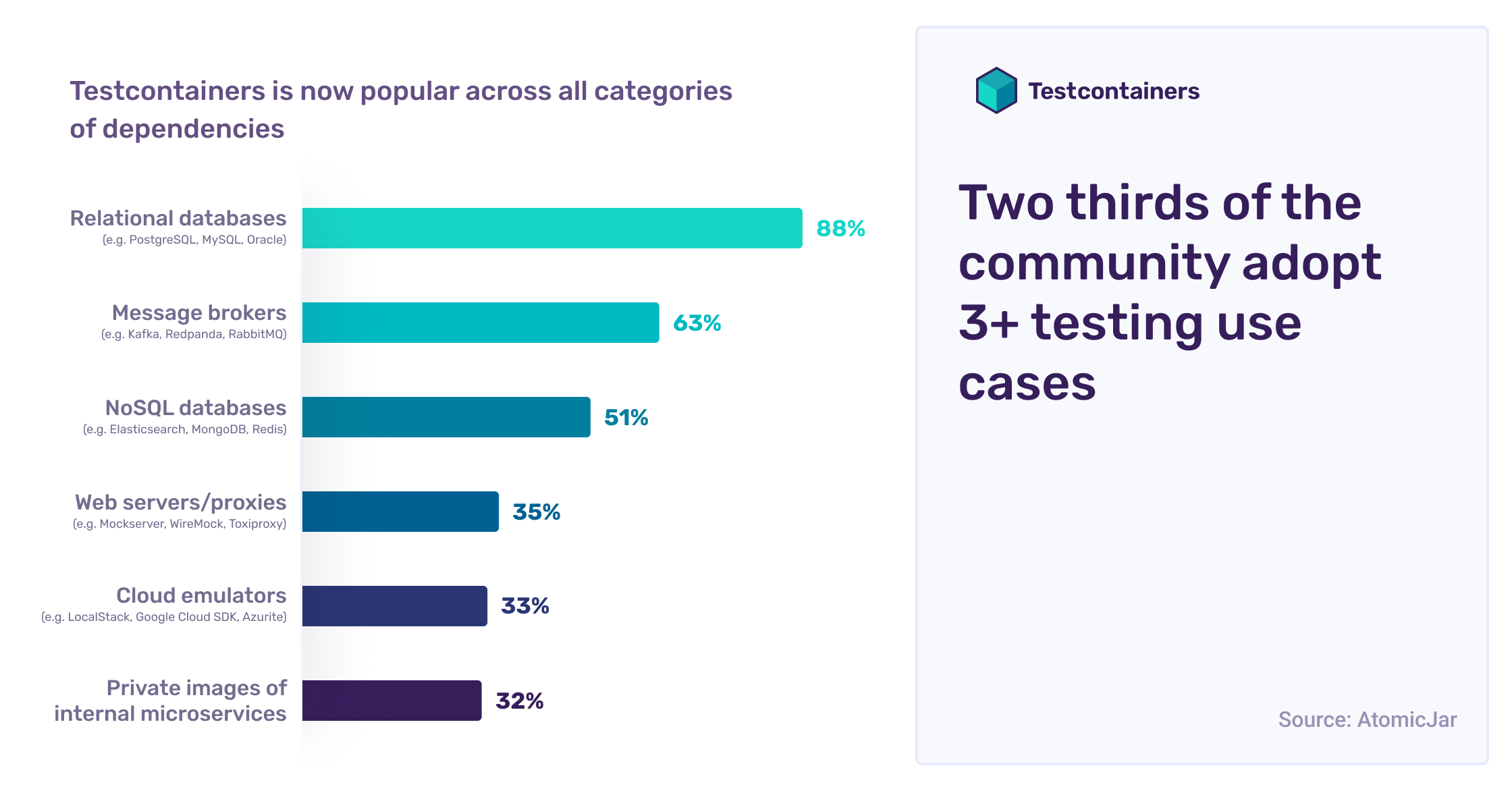

Two-thirds of the community has adopted 3+ testing use cases

Testcontainers is now popular across all categories of dependencies. Nine out of ten users test with relational databases (e.g. PostgreSQL, MySQL, Oracle), and nearly two-thirds test with a message broker (e.g. Kafka, Redpanda, RabbitMQ). Impressively, two-thirds of the community has already adopted Testcontainers for at least three different use cases!

Many unusual and fascinating use cases exist besides the mainstream ones above. Some users have reported testing infrastructure services (e.g. SFTP, SMTP, LDAP / SAML), browser automation (e.g. Selenium, WebDriver), and deployment infrastructure (e.g. Jenkins, Artifactory).

Check out Testcontainers modules to get new testing ideas, and keep in mind that Testcontainers libraries also work with any container image!

3

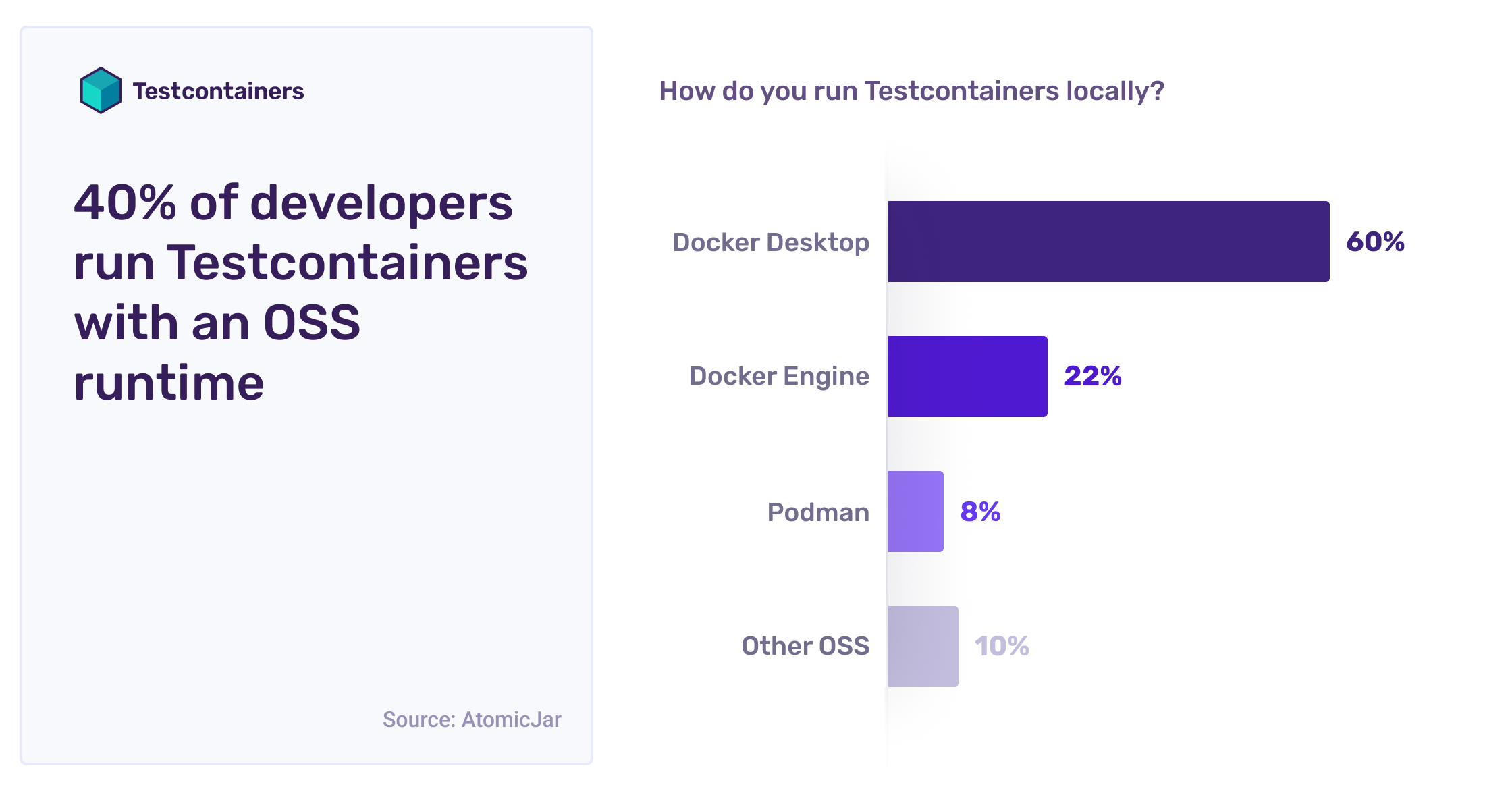

40% of developers run Testcontainers with an OSS runtime

90% of the Testcontainers community runs tests locally on their machine, either while writing code or after writing new tests. Among desktop users, half run their Testcontainers-powered automated tests “several times per day” while coding. This illustrates how Testcontainers helps shift tests left, from staging or CI into the inner development loop on the desktop.

Interestingly, Testcontainers adoption seems to abstract away the details of the underlying container runtime. Excluding users who rely exclusively on Testcontainers Cloud, 60% of desktop users run Docker Desktop, while 40% have switched to an OSS runtime such as Podman or Rancher Desktop.

Nearly half of desktop users are on macOS, three out of four of whom use the latest Apple silicon (ARM/M1) chips. Another 30% of the community is on Windows, usually via the Windows Subsystem for Linux (WSL).

Would you like to give Podman or Rancher Desktop a try? With the free Testcontainers Desktop app, you can easily switch your runtime. And if your desktop runs on Apple silicon or Windows, switch to Testcontainers Cloud to seamlessly run your containers on x86 and Linux, just like in prod.

4

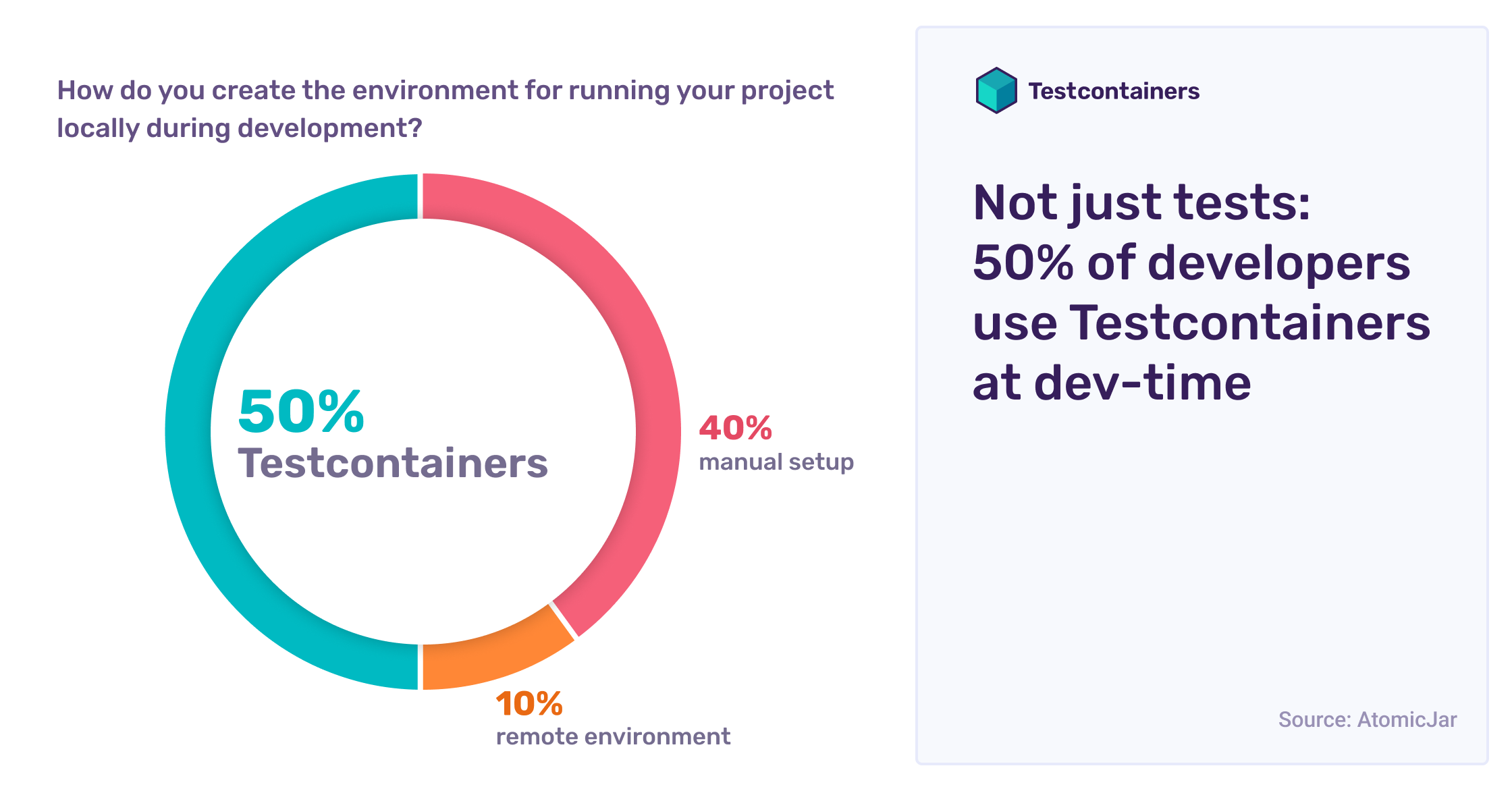

Not just tests: 50% of developers use Testcontainers at dev-time

In addition to automated tests, half the community now relies on Testcontainers to provision dev-time local dependencies directly from their code. This is particularly true for the Testcontainers Java community, thanks to framework-level support in Spring Boot, Quarkus, and Micronaut. For other Testcontainers languages, the percentage is lower at 35%, though advocacy for local development practices is picking up (e.g. local development in Go).

Within the other half of the community, 40% rely on a manual setup with Docker, Docker Compose, or static dependencies. The remaining 10% rely on a remote setup such as a shared pre-prod environment, a pre-prod Kubernetes cluster, or sometimes production services directly.

When something goes wrong with their Testcontainers dependencies, 30% of users simply write more Testcontainers-based tests to troubleshoot! The other 70% primarily rely on out-of-code debugging techniques, such as reviewing container logs (80% of the time), connecting a local debug tool or IDE plugin (40%), or opening a shell within a running container (30%).

Testcontainers Desktop lets you set fixed ports, so you can easily connect your debug tools, and freeze containers to prevent them from shutting down while you debug. Together, these features make the debugging approaches above much easier. Give it a try!

5

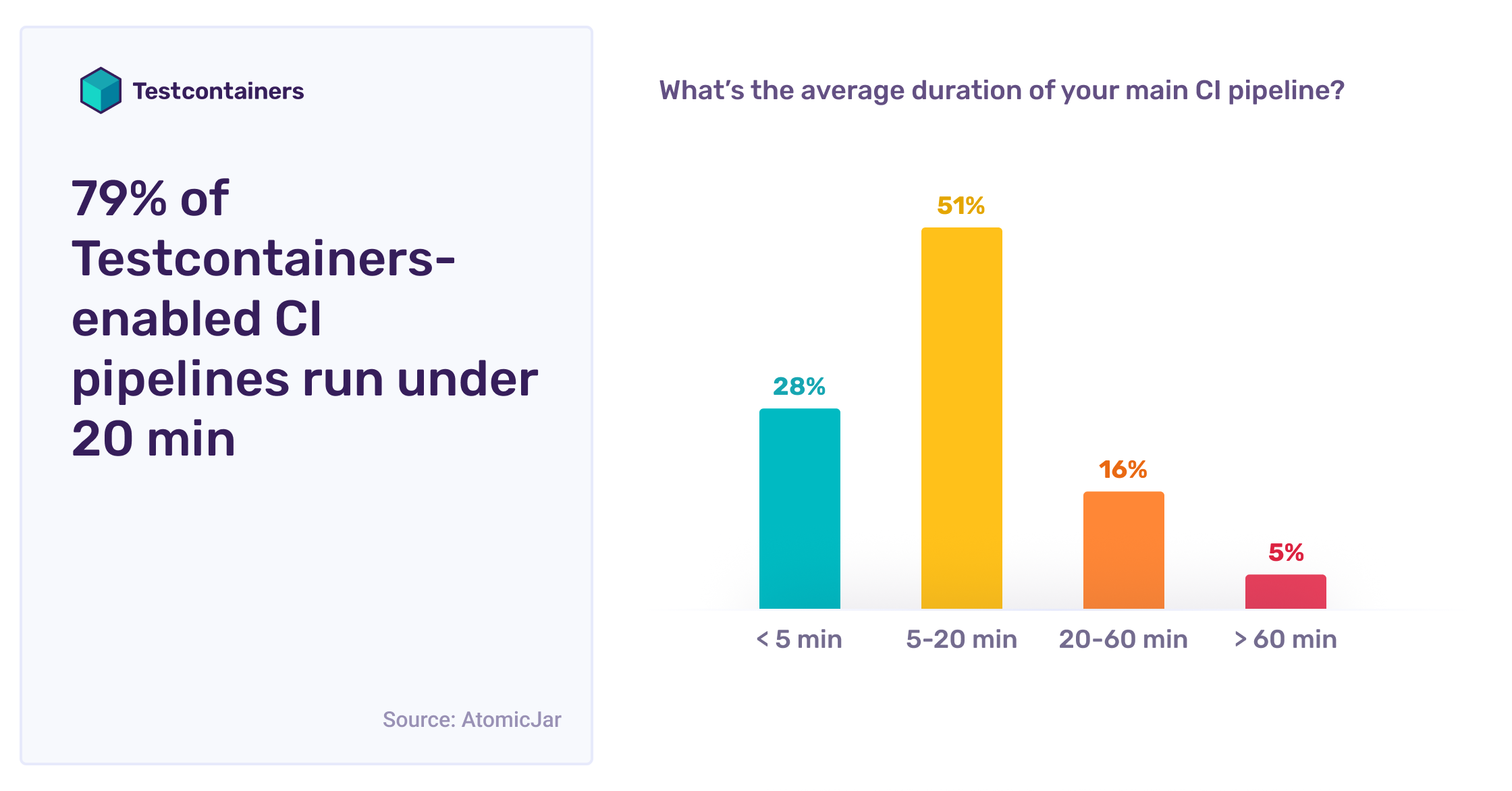

79% of Testcontainers-enabled CI pipelines run under 20 minutes

90% of Testcontainers users run their tests in CI, typically on every push (70%) but sometimes only for the main branch or on demand (20%).

Thanks to Testcontainers libraries, integration tests are both reliable and quick. For more than a quarter of the community, CI pipelines run in under five minutes; for about half, they take between five and twenty minutes.

Excluding users who rely exclusively on Testcontainers Cloud, one in four users relies on a pure SaaS setup to run Testcontainers; the other 75% instrument their CI manually, via Docker in Docker (DinD), self-hosted CI workers, or a mounted remote Docker daemon.

Overall, GitHub Actions, Jenkins, GitLab, and Azure Pipelines are the four most popular CI platforms, with more than 90% of CI users relying on one of them.

If you’d rather not tackle the configuration problems and security concerns of running Docker-in-Docker or a privileged daemon in your CI, check out Testcontainers Cloud. You even get multiple cloud workers to execute your test suites in parallel, without needing to scale CI runners.

6

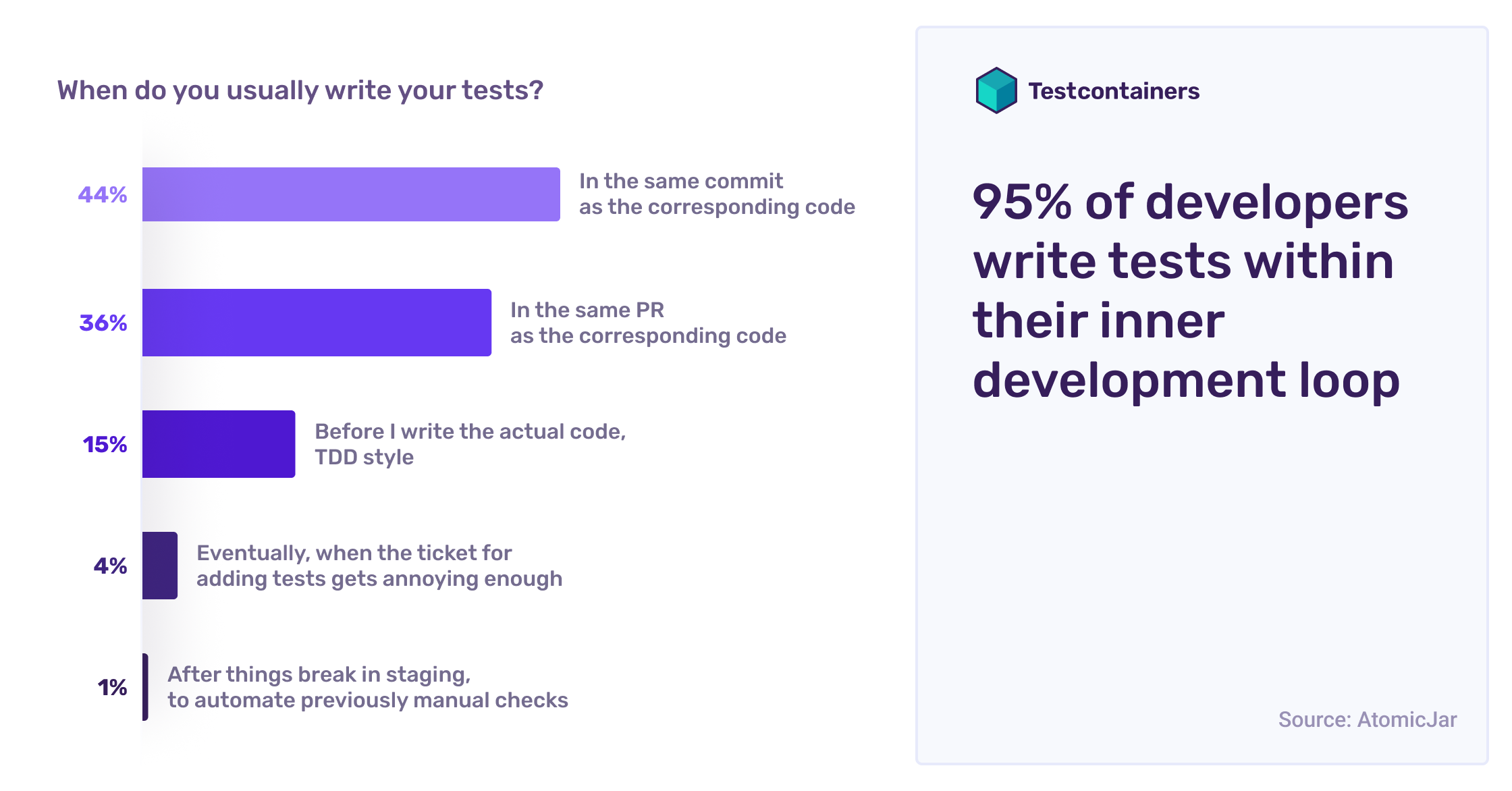

95% of developers write tests within their inner development loop

Testcontainers libraries make it easy to write integration tests as part of the inner development loop. In fact, 95% of the community reports writing tests alongside their actual code. Test-Driven Development (TDD) practitioners, who write their tests first, account for 15% of the community, while the remaining 80% write their tests within the same commit or PR.

Tabs vs. spaces? Vim vs. Emacs? Some choices are polarizing. The Testcontainers community is split 50-50 on whether to run the whole test suite while developing, or only the tests related to the particular code they’re working on.

When writing tests, 55% of the community targets explicit quantitative test coverage goals, with target coverage generally situated between 75% and 90%. Within the remaining 45% without explicit quantitative targets, 20% report that focusing on covering all happy paths or on end-to-end tests has proven more useful.

To learn new testing practices and stay up-to-date with product and OSS news, consider subscribing to the Testcontainers newsletter. We send out monthly roundups with new guides, upcoming webinars, and more, directly to your inbox!

7

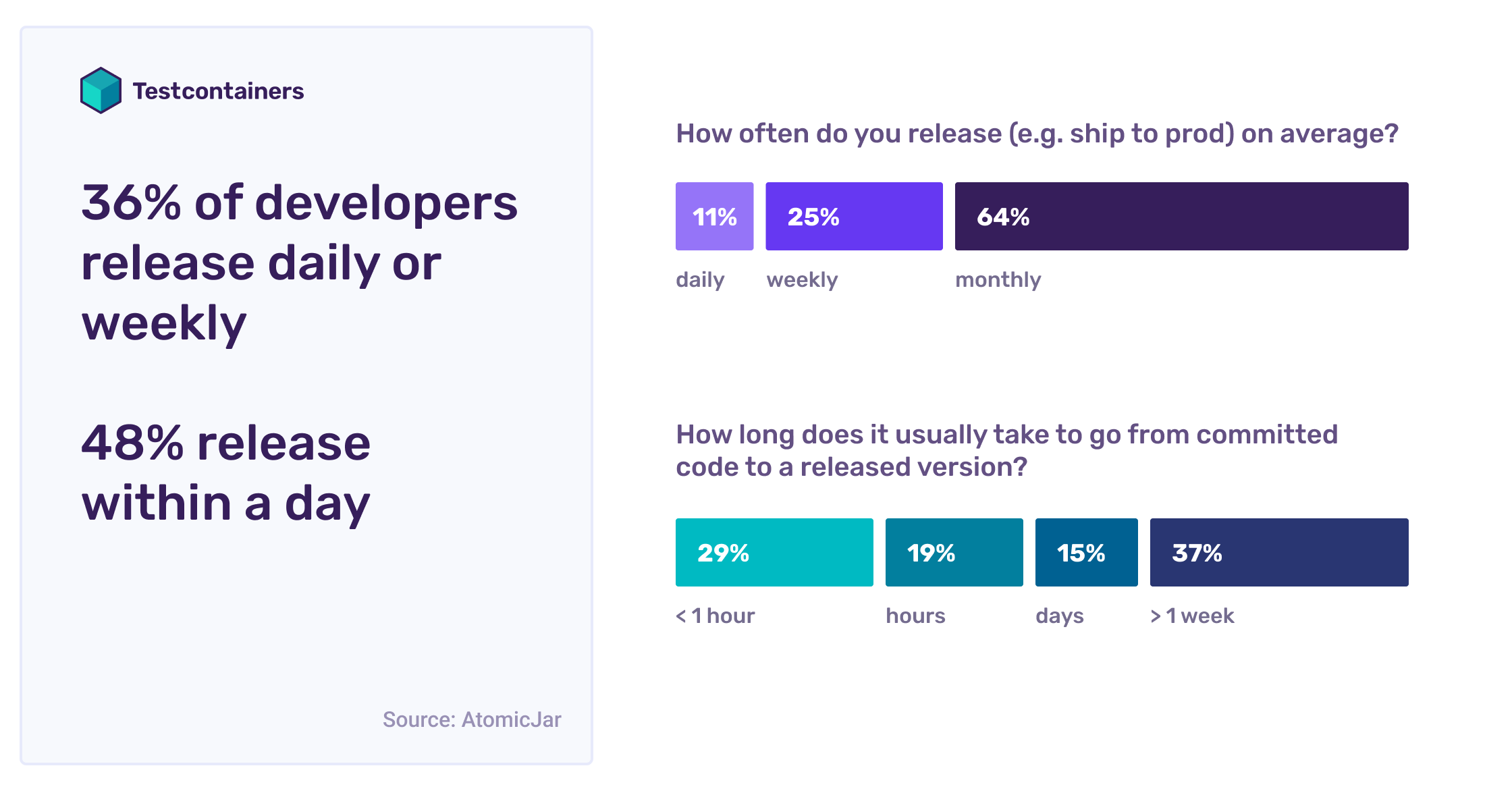

36% of developers release at least weekly; 48% release within half a day

A third of the community ships to production at least weekly, including 10% who ship several times per day. For the rest of the community, release frequency varies widely, especially for teams shipping libraries or frameworks rather than an application.

48% of the community releases in half a day or less, including 29% who routinely go from committed code to a released version in under an hour. For the other half of the community, releases take several days or weeks.

For four out of five developers, shipping still involves some manual checks in a staging environment. This is typically a permanent environment, though 5% rely on per-release ephemeral environments. Fewer than 20% rely on continuous deployment to production (sometimes as a canary) once automated tests pass.

While having a staging environment doesn’t necessarily imply slower releases, getting rid of it does seem to speed things up. Among those who have invested in fully reliable, automated integration tests and enabled continuous deployment straight to production, the share releasing in under half a day jumps from 48% to over 81%.

If you’d like to gain confidence in your automated tests and get closer to getting rid of manual checks in your staging environment, check out our Testcontainers guides. Just clone the repo, follow along, and learn by getting your hands dirty!