The end of the year is a great time to reflect on the past, present, and future. For us at AtomicJar, 2021 was nothing but exciting. It will forever remain in our history as the year when we founded the company, the year when we released the first version of Testcontainers Cloud to our private beta users, and the year we became confident that the future of developer-focused integration testing is joyful and bright!

And while we are heads down building Testcontainers Cloud and getting it ready for the public, we couldn’t help noticing a lot of trends in the devtools ecosystem, and most of them have found their place in our product too.

Most of the recent developments in devtools made us believe that we are approaching a new era, DevTools 2.0 if I may, similar to how advances in technologies made Web 2.0 a thing.

You may ask “what makes it 2.0?” And the answer is… everything! (but please keep reading 😀)

Today, we are going to look into some of the trends in the devtools space and make predictions about how they will evolve in 2022.

The era of DevTools 2.0 is… cloudy

As a developer, I used many devtools in the past, but almost all of them were… local.

IDE? Local. Build system? Local. Profiler? Local. Even some exceptional tools such as JRebel were local. And while everything I created ended up being deployed to clouds, my development environment never benefitted from the power of cloud environments. But that changed, and it changed so fast!

And it is hard to say that the idea of cloud-powered devtools wasn’t on the surface! In fact, it was something forgotten. Every time someone mentions a novel idea of using a thin client to connect to a remote destination that is much more powerful, I cannot stop thinking of… terminals and mainframes.

Why were thin clients or “terminals” used in the first place? Because it would be expensive to give every operator a powerful machine to perform the computations, plus they would require a lot of space and most probably be under-utilized.

But what happens when you connect multiple clients to the same mainframe? Now everyone has a “tiny” (he-he) device in front of them that lets them perform massive computations on CPUs running at a whopping tens of MHz!

Fast forward to today, we have millions of developers working on their laptops, burning their knees while waiting for something to be indexed or compiled locally. Their requests are growing, too. 32GB of RAM is a must, “Intel i9? Can I get i11?”, and it should also weigh less than 2kg because despite working from the same sofa for two years straight, we still value mobility.

And while some hardware progress (such as Apple’s M1 revolution) overcomes some of these limitations, it brings a whole new set of challenges, because our existing devtools are still relying on Intel architecture in many places.

But what happens if we separate our development workloads from the machine we are using for the development? And, most importantly, are we improving or reimagining DevX (“Developer Experience” here and after)? We bet on the latter!

In 2021, we saw the emerging popularity of Cloud IDEs: Codespaces by GitHub, Gitpod, Fleet by JetBrains, and more! They all share the same concept – the developer’s machine does not have to be the one that indexes the code, compiles the apps, runs them, executes the tests, and so on. Instead, it acts as a terminal that instructs a remote development environment to perform these actions.

This dramatically reduces the requirements for development machines while also bringing other advantages, such as the reproducibility of environments, isolation, environment sharing (I hope to see more of it in 2022!), and of course portability of your setup – imagine starting coding from a thin terminal at McDonald’s, then continuing on a bus from your iPad, and then finishing off your work from home, all with exactly the same environment. I wish we could do the same for physical office objects! (portable Herman Miller chair, anyone?)

But this is only the beginning. More and more devtools are moving to the cloud.

Why download the base Docker image, use up CPU and RAM (and root privileges too!) to build a Docker image locally if you can delegate it to Google Cloud Build?

Why build and test locally in IntelliJ IDEA if you could submit it to a cloud-hosted version of TeamCity? (Oooops! That one existed for 15 years 🤓 Just IMO the world wasn’t ready for it yet!)

And of course, why start Docker containers for integration testing locally if you could run them in a cloud with Testcontainers Cloud!

Since switching to the M1 MacBook Air for my open source work, I’m really leaning on my remote dev machine, a VM running in GCP, and Cloud Build as part of my developer workflow. Access to a native Linux environment is absolutely critical.

— Kelsey Hightower (@kelseyhightower) February 2, 2021

What’s interesting though is that DevTools 2.0 will be focused on all these tools working together, each in their niche. Cloud IDEs alone would not solve everything. They can try, but I guess that the best Cloud IDEs will be marketplaces with lots of integrations for various use cases.

Cloud IDEs give you what they are designed for – an IDE (what a surprise!), but, should you need to build an image, perform a security scan of the dependencies with Snyk, or run your Testcontainers-based tests, there will be integrations, similar to plugins in local IDEs.

And these “for purpose” integrations will make the whole combo very efficient! Your Cloud IDE can be a small instance (1 CPU, 1GB of RAM), just enough to perform code editing, but then, once you need to run your tests, for example, Testcontainers Cloud would spin up these heavyweight containers for you. The difference? It will do it on demand, serverless-style, and shut them down when you go into a long coding session without running your tests for 2-3 hours, so that you don’t need to over-provision your Cloud IDE resources for something that you run 20% of your development time.
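To make the “on demand, shut down when idle” idea a bit more concrete, here is a minimal sketch in plain Python. It is not how Testcontainers Cloud is implemented; `OnDemandWorker`, its `idle_timeout_s` parameter, and the injected clock are all hypothetical names made up for illustration. It only models the bookkeeping: start when a task arrives, stop once nothing has used the worker for a while.

```python
import time


class OnDemandWorker:
    """Hypothetical model of a remote worker that runs only while it is needed."""

    def __init__(self, idle_timeout_s, clock=time.monotonic):
        self.idle_timeout_s = idle_timeout_s
        self.clock = clock          # injectable so the example can simulate time
        self.running = False
        self.last_used = None
        self.cold_starts = 0

    def run(self, task):
        """Start the worker if needed, run the task, and note the time of use."""
        self.reap_if_idle()
        if not self.running:
            self.running = True     # stands in for provisioning real resources
            self.cold_starts += 1
        result = task()
        self.last_used = self.clock()
        return result

    def reap_if_idle(self):
        """Shut the worker down once it has been idle longer than the timeout."""
        if self.running and self.clock() - self.last_used > self.idle_timeout_s:
            self.running = False


# Usage with a simulated clock (seconds), so nothing actually waits.
now = [0.0]
worker = OnDemandWorker(idle_timeout_s=600, clock=lambda: now[0])

worker.run(lambda: "tests passed")   # first run: cold start
now[0] += 60
worker.run(lambda: "tests passed")   # one minute later: still warm
now[0] += 3 * 3600                   # a three-hour coding session, no tests
worker.reap_if_idle()

print(worker.running, worker.cold_starts)  # prints: False 1
```

The trade-off this models is the usual serverless one: you pay a cold start after a long pause, but you stop paying for heavyweight resources during the 80% of the time you are only editing code.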

And even if you are not using Cloud IDEs, these cloud versions of your favourite devtools will change how you work locally.

DevTools 2.0 are living on the edge

One big difference between cloud-hosted vs. local devtools is that the network latency comes into play. As much as we would like to, we can never reduce it to zero. We need to accept that, as soon as we move the workloads from our machines to clouds (a.k.a. someone else’s machines), there will be network latency. And it may not be super critical in some cases (for example who cares if autocomplete suggestions appear in 20ms or 100ms?), but very important in others.

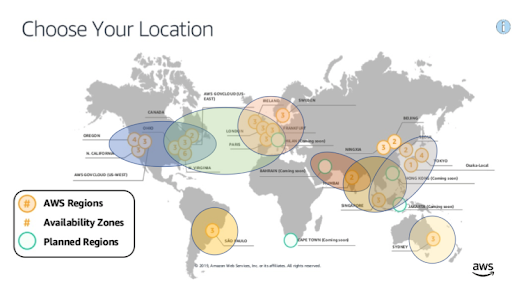

And while modern cloud providers give a decent global coverage with their datacenters, they are still very regional. We expect to see more AWS Local Zones and similar services that would allow bringing compute resources closer to the users while not sacrificing the convenience of AWS interfaces (think EC2). In that regard, some cloud-based devtools are similar to Cloud Gaming: not only do they run the workloads remotely, but they also need to ensure that the latency won’t ruin the experience.

There has been a lot of progress in Edge Functions where one can deploy JavaScript or recently WebAssembly to edge locations (Cloudflare Workers is a great example). However with devtools we often need to deploy not just code, but containers or sometimes even VMs.

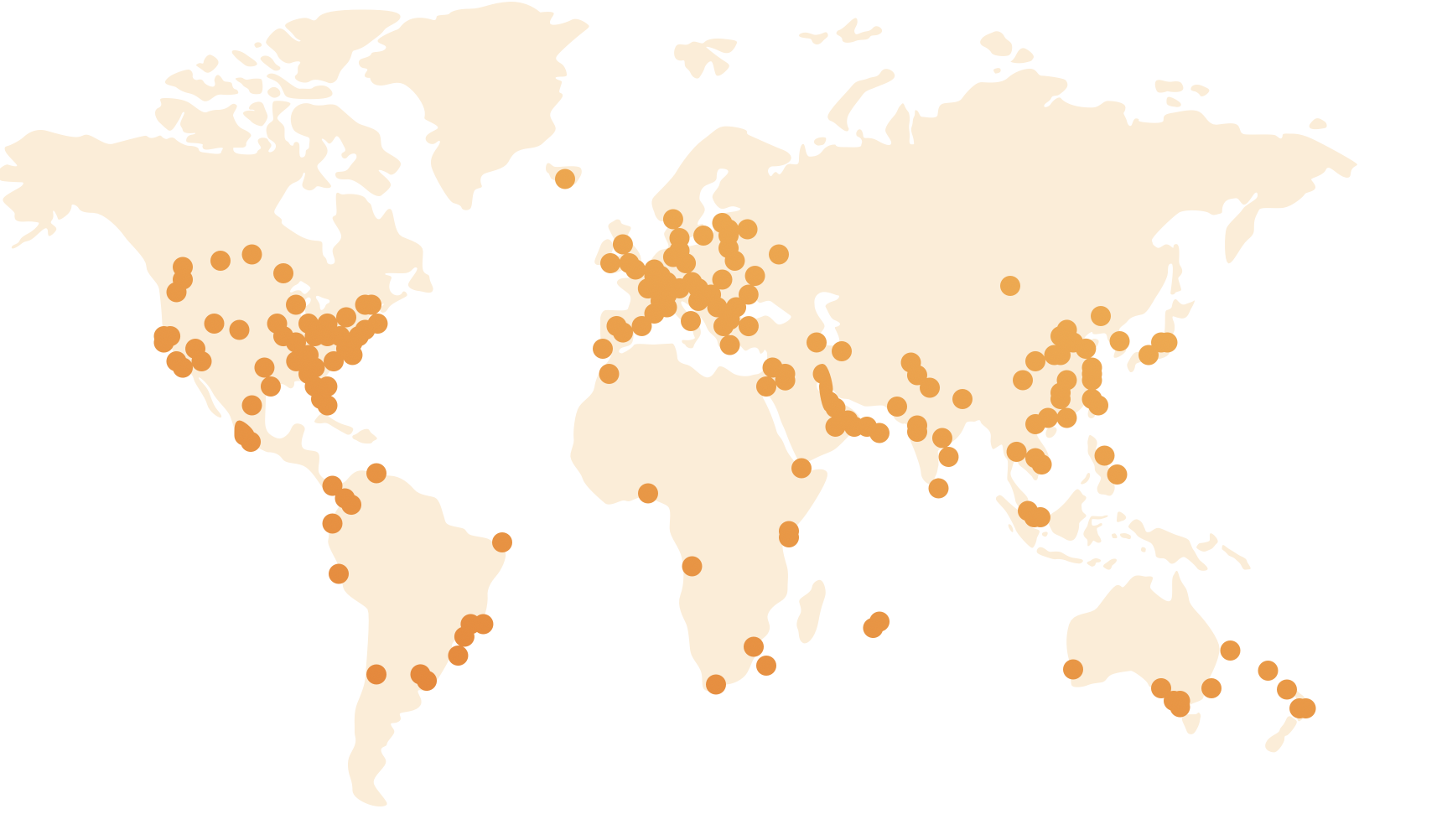

And while Cloudflare’s global edge network looks like this:

AWS’ and other clouds’ compute locations cannot boast of a similar coverage:

This might be enough to build a globally distributed Cassandra cluster, for example, but can fail to provide equally fast services to the users no matter where they are located in the world (I feel bad for people in Alaska or Siberia when I look at both maps!)

We at AtomicJar know this topic very well – Testcontainers Cloud is a globally distributed deployment that brings workloads closer to the users to provide the best experience. Currently, we occasionally rent compute resources from local utility computing providers (#SupportLocalBusinesses). But we foresee a new category of infra startups focused on providing Edge Computing as-a-Service, for this new generation of SaaS products that want to offload something from users’ computers but not too far away from them.

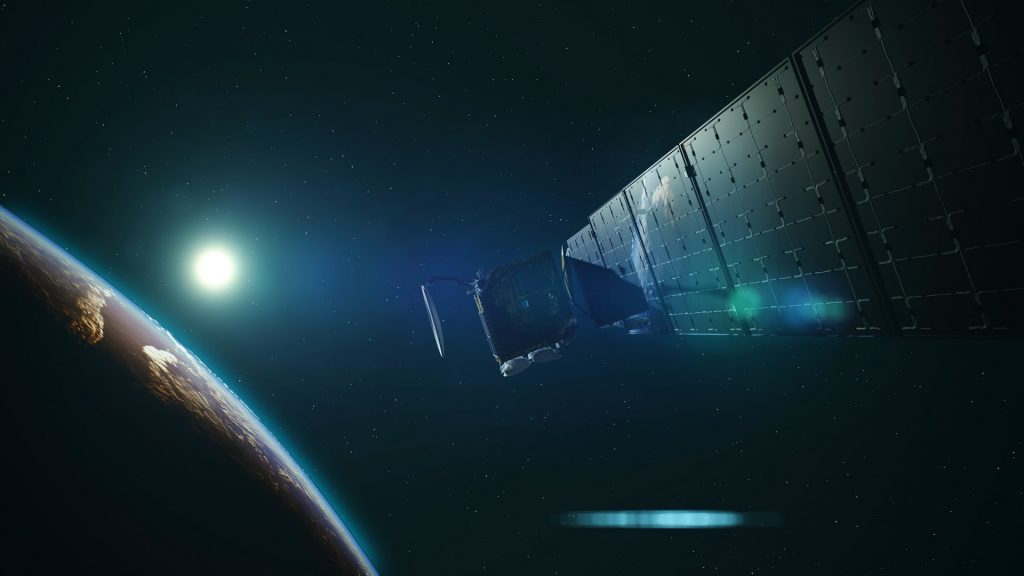

And, since we are talking about the future, while it may not happen in 2022, we very much look forward to having Satellite Computing! If we can have a stable internet connection with Starlink to watch a funny video of a cat from YouTube by sending a packet into orbit, having it sent back to a datacenter located on Earth, and then receiving a response back, why don’t we reduce the roundtrip… and host datacenters in orbit? Bonus: Satellite datacenters that serve low-latency requests to the users under them vs following their geographical location, so that the same satellite would serve multiple timezones to avoid being underloaded in non-peak hours.

Sounds crazy and not real? Go check Microsoft Azure Space!

DevTools 2.0 will be watching you… and this is great!

Before you start panicking – don’t worry, there is no Big Brother Developer who will always keep an eye on how often you copy code from Stack Overflow or ask GitHub Copilot to implement a complex algorithm for you. What we mean is the emerging category of CI & Test Observability – a new and exciting focus for APM vendors, who previously aimed at production environments but are now shifting left (well, who doesn’t in DevTools 2.0, right?). Be it our friends at Thundra, Elastic APM, or many other vendors.

Why do they do it? Because now that hiring is harder than ever, companies worry a lot about developers’ efficiency and how to scale engineering teams vertically, not horizontally. Should you, as a developer, worry about it? Absolutely not! In fact, you should be happy. Have you ever had to explain to your manager that you need tool X to be more productive with Y? Now imagine having the same conversation but with some real numbers behind it, and not only for you but for the whole team. This may actually change how DevX improves within teams. Think about it as a performance profiler for your engineering process. Yes, you can optimize things by looking at code, but it is easier to optimize when your profiler tells you about the hotspots that demand optimization!

How do they do it? By not reinventing the wheel, but using existing technologies, of course! One such enabling technology is OpenTelemetry – a widely popular API for instrumenting, generating, collecting, and exporting telemetry data (metrics, logs, and traces) to help you analyze your software’s performance and behaviour. And yes, that applies to your development process as well.

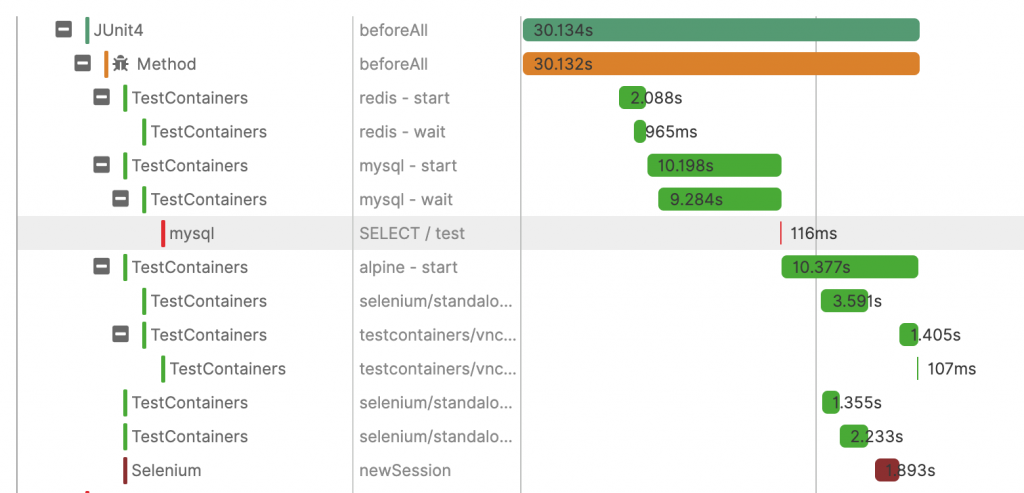

Ever wanted to know what you can optimize in your test suite, with visibility fine-grained enough to answer questions like “how long does it take for container X to start?” or “what parts of the build take the most time?” Some of it was previously available case by case (e.g. Gradle Build Scan to analyze your Gradle builds), but now we are talking about an effort to standardize all of it and to see the performance picture of your development processes, not just your software.
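As a rough illustration of what such visibility boils down to, here is a small stdlib-only Python sketch that times named phases of a test run and lists the slowest ones. It deliberately does not use the OpenTelemetry SDK; the `span` helper, the `finished_spans` list, and the phase names are hypothetical stand-ins, and a real setup would export this data through an OTel-compatible library instead of keeping it in memory.

```python
import time
from contextlib import contextmanager

# Hypothetical in-memory store of finished spans: (name, duration in seconds).
finished_spans = []


@contextmanager
def span(name, clock=time.perf_counter):
    """Record how long the wrapped block takes under the given name."""
    start = clock()
    try:
        yield
    finally:
        finished_spans.append((name, clock() - start))


def slowest(spans, top=3):
    """Return the `top` longest spans, longest first."""
    return sorted(spans, key=lambda s: s[1], reverse=True)[:top]


# Usage: wrap the phases of a (simulated) test run.
with span("start container X"):
    time.sleep(0.05)   # stands in for waiting until a container is ready
with span("run tests"):
    time.sleep(0.01)   # stands in for the actual test execution

for name, seconds in slowest(finished_spans):
    print(f"{name}: {seconds:.3f}s")
```

Even this toy version answers the “how long does container X take to start?” question; the value of a standard is that every tool in the chain can emit and consume the same kind of data.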

And while some solutions such as Thundra instrument Testcontainers automatically, we are also looking forward to jumping onto this exciting train and are considering adding OpenTelemetry instrumentation to Testcontainers’ code, so that any OTel-compatible tool will be able to analyze its execution, giving you a great level of visibility into your test setup and helping to identify potential optimizations!

DevTools are getting a helping ARM

In 2021, Apple M1 laptops drove a revolution comparable to what the iPhone did for smartphones. Few would dispute that in 2021 alone more projects added ARM support than (IMO) in all previous years combined. And we think this is a revolution because the question changed from “should I try ARM?” to “why not?”, with some great success stories coming from Liz Fong-Jones and others.

What makes ARM especially great for devtools is that it gives a great balance between the cost, performance, and energy efficiency (let’s not forget about the impact we are making on our planet by running all these under-utilized CI nodes!). And while some popular technologies are lagging behind and would require a large amount of work (e.g. Kafka’s Docker image), it makes a lot of sense to start saving costs and migrating your local and CI environments to ARM while using Testcontainers Cloud and other similar devtools to run Intel-based workloads.

That’s the beauty of DevTools 2.0 – if previously we were running a single over-provisioned Jenkins worker x86 node, we now can run lightweight ARM workers that would delegate tasks to other tools. That includes some crazy scenarios where a CI worker would run Gradle, which would massively parallelize the build by using Test Distribution, and each Gradle worker would also be a small and efficient ARM instance that does not require Docker thanks to Testcontainers Cloud!

Developers are the kings

And here’s my last prediction, which isn’t really a prediction, but a statement: developers are the kings!

With the global shortage of developers, hiring more developers is no longer an easy solution for companies of any size. But making existing developers happy and more productive is.

And, as in any gold rush, the sellers of shovels and throne manufacturers will benefit the most from these upcoming changes. 😀

Especially those who make comfortable thrones and have spent enough time understanding what makes a decent shovel, not just those who pivoted to making tools because they noticed a demand.

And, as a developer myself, I can only recommend that you never undervalue your devtools, the thrones you will be sitting on, because you will spend a lot of time with them, and, as Kelsey Hightower said, the top investment pick for 2022 is… yourself.

Happy New Year,

Sergei